Kenny | 22 March 2021

This is the second post in our blog series where we dive deep into the CNCF Sandbox. In our last post we used KubeVirt to run a Kubernetes cluster within a Kubernetes cluster. This time we’ll explore how to integrate chaos engineering into the test suite of our Helm charts with LitmusChaos.

Chaos engineering is the practice of running experiments against your systems (e.g. applications) to find out whether they are resilient, and LitmusChaos is a tool that makes it possible to perform chaos engineering within a Kubernetes cluster. Specifically, it is a toolset developed to help Kubernetes developers and Site Reliability Engineers (SREs) practice chaos engineering with Kubernetes. Developers would run the chaos experiments during development as part of unit or integration testing (or as part of a CI pipeline), whilst SREs would run them against the application and/or the surrounding infrastructure. For both groups, using LitmusChaos in this way should lead to more resilient infrastructure and applications.

LitmusChaos makes this possible by extending the Kubernetes API with additional Custom Resource Definitions (CRDs) that can be used to create, manage and monitor the chaos experiments. The three main CRDs are ChaosExperiment, ChaosEngine and ChaosResult.

Alongside these CRDs is the LitmusChaos Kubernetes Operator, which searches for active ChaosEngines and invokes the ChaosExperiments declared in each engine.

You can create your own custom chaos experiments to use, but the LitmusChaos team have created a list of generic/common experiments that can be used. The experiments can be found in the ChaosHub. Some examples of common experiments are:

This demo will be using one of the provided experiments to fail an application’s pod as part of a Helm chart test suite.

When you package your Kubernetes application into a Helm chart you may also choose to include a test suite for the Helm chart to validate your deployed Helm charts.

A good use case for this is to perform a health check against your Kubernetes application (deployed via a Helm chart). However, imagine you have an application that is fully functional when it’s initially deployed but loses some (or all) of its functionality when a disaster strikes. For example, can we be confident that our application will still be fully functional if a pod crashes and restarts?

Ideally, we’d want a test suite that can also prove that an application is resilient. By packaging LitmusChaos experiments into a Helm chart test suite, it should be possible to create a test suite that shows an application is resilient within a Kubernetes cluster.

Before going over the implementation details, I want to provide an overview of the workflows we’ll be combining, followed by the new workflow.

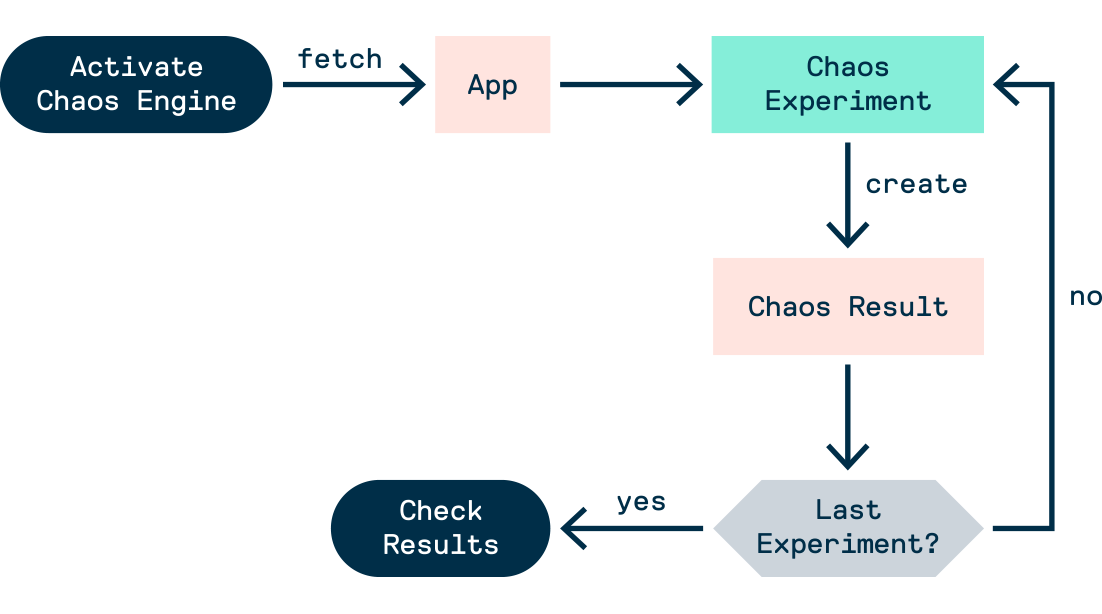

Assuming the LitmusChaos operator is deployed, the workflow of a chaos experiment (via terminal only) is as follows:

1. Deploy the `ChaosExperiments`
2. Deploy a `ChaosEngine` which declares which ChaosExperiments to use
3. The operator runs the experiments and `ChaosResults` objects are created
4. Describe the `ChaosEngine` and `ChaosResults` objects to discover the results of the experiments

Note: Deployments can be done with the `kubectl apply -f` command
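The terminal-only workflow above might look like the following sketch. The file names `experiments.yaml` and `engine.yaml`, the function name and its arguments are placeholders chosen for illustration, and the ChaosResult name assumes Litmus names results `<engine>-<experiment>`.

```shell
#!/usr/bin/env bash
# Sketch of the manual chaos workflow. File names and arguments are hypothetical.

run_manual_chaos() {
  local namespace="$1" engine="$2" experiment="$3"

  # 1. Deploy the ChaosExperiments (e.g. manifests taken from the ChaosHub).
  kubectl apply -n "$namespace" -f experiments.yaml

  # 2. Deploy the ChaosEngine that declares which ChaosExperiments to use.
  kubectl apply -n "$namespace" -f engine.yaml

  # 3. The operator runs the experiments and creates the ChaosResult objects.
  # 4. Inspect the ChaosEngine and ChaosResult to discover the results.
  kubectl describe chaosengine "$engine" -n "$namespace"
  # Assumption: the result object is named <engine>-<experiment>.
  kubectl describe chaosresult "${engine}-${experiment}" -n "$namespace"
}

# Example: run_manual_chaos default nginx-chaos pod-delete
```

Every step here is a separate manual command, which is the friction the Helm-based workflow aims to remove.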

Packaging the chaos experiments into a Helm chart test suite will improve this workflow. Some benefits include:

So including the experiments in a Helm chart test suite will not only improve the test suite, it’ll also improve the workflow of the chaos experiments. A win-win for both tools.

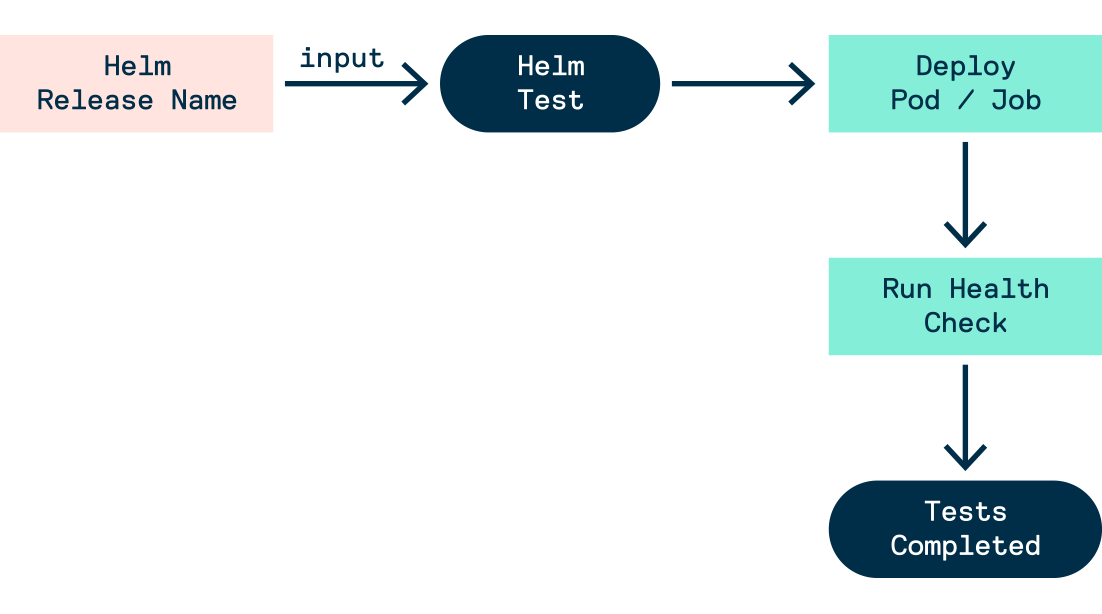

Now, we’ll go over the workflow for a Helm chart test suite.

Tests in a Helm chart live in the templates/ directory. Therefore, when creating a Helm chart we need to create a template for a Pod or Job with the helm.sh/hook: test annotation. This annotation declares that the manifest should only be deployed when Helm’s test hook is invoked, which happens when the helm test command is run.
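As a minimal sketch of such a template, the snippet below writes a test Pod manifest into a chart. The chart path, file name, pod name and `busybox` image are placeholders for illustration and are not taken from the chart used in this post.

```shell
#!/usr/bin/env bash
# Sketch: write a minimal Helm test Pod template into a chart directory.
# The file name, pod name and image are hypothetical.

write_test_pod_template() {
  local chart_dir="$1"
  mkdir -p "$chart_dir/templates/tests"
  # The quoted 'EOF' stops bash from expanding the Helm template expressions.
  cat > "$chart_dir/templates/tests/test-pod.yaml" <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: "{{ .Release.Name }}-test"
  annotations:
    # Helm only deploys this manifest when `helm test` invokes the test hook.
    "helm.sh/hook": test
spec:
  restartPolicy: Never
  containers:
    - name: test
      image: busybox
      # Exit code 0 marks the test as passed; any other code marks it as failed.
      command: ["sh", "-c", "exit 0"]
EOF
}

# Example: write_test_pod_template ./mychart
```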

The workflow for running a Helm test suite is:

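1. Install the Helm chart (templates with the `helm.sh/hook: test` annotation will not be deployed)
2. Run the `helm test` command to invoke the test hook
3. Templates with the `helm.sh/hook: test` annotation will be deployed, this includes the Pod(s) and/or Job(s) that will run the containers with the tests
4. If the containers terminate successfully (`exit 0`) then all tests passed.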
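On the command line, this workflow could be wrapped as in the sketch below. The function name, release name and chart path are placeholders.

```shell
#!/usr/bin/env bash
# Sketch of the Helm test workflow. Release and chart names are hypothetical.

install_and_test() {
  local release="$1" chart="$2"

  # Install the chart; templates annotated with helm.sh/hook: test are skipped.
  helm install "$release" "$chart" || return 1

  # Invoke the test hook, which deploys the test Pod(s)/Job(s).
  # --logs prints the output of the test pods once they have finished.
  # helm test exits non-zero if any test container exits non-zero.
  helm test "$release" --logs
}

# Example: install_and_test my-release ./mychart
```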

With chaos experiments, the result of the experiments can be used to determine how the container should terminate.

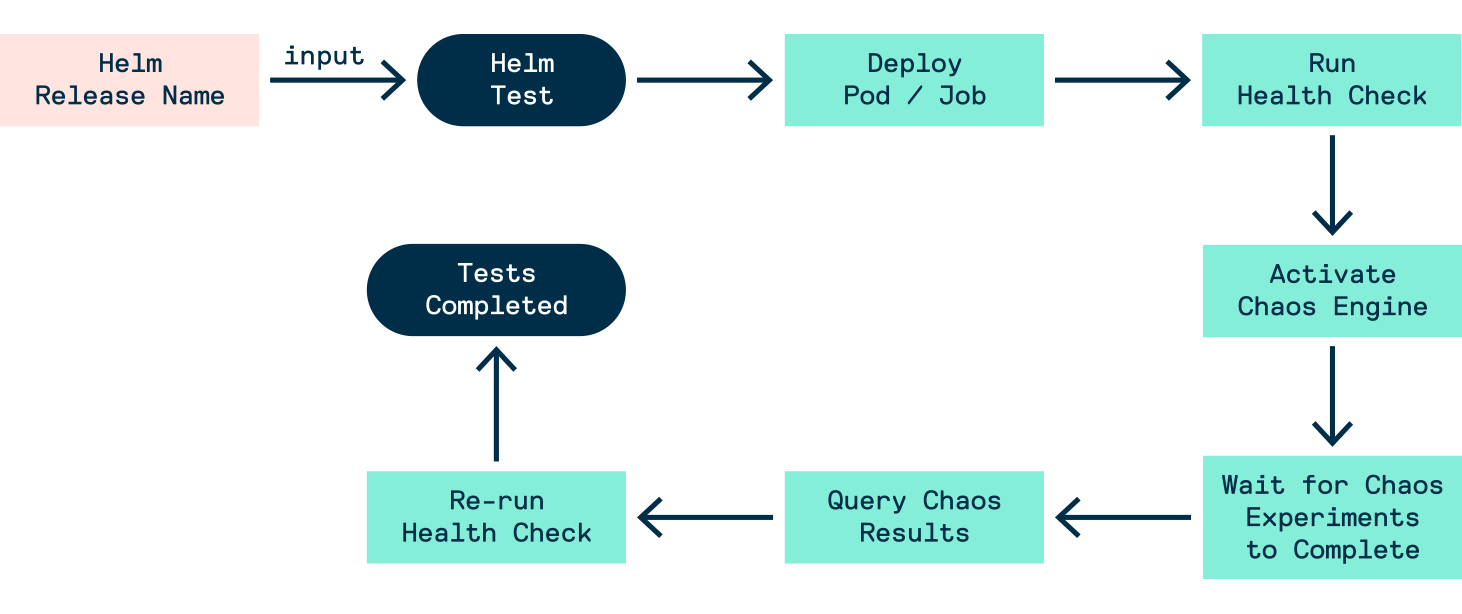

When we package LitmusChaos experiments into a Helm chart test suite, we can achieve the following workflow:

1. Deploy the Helm chart and the `ChaosExperiments`
2. Run `helm test RELEASE_NAME --logs --debug`
3. A `Pod` or `Job` object is deployed, which will set the ChaosEngine to active and then go through 3 phases

Now the question is how do we create this new workflow?

First, we’ll need the prerequisites:

- The `ChaosEngine` and the necessary RBAC objects to run the experiments should be deployed as part of the Helm chart
- The `ChaosEngine`'s `engineState` should be `stop` by default
- The test (`Pod`/`Job`) must:
  - Have `bash`, `curl` and `jq` installed
  - Have the `helm.sh/hook: test` annotation
  - Be able to `GET` and `PATCH` the ChaosEngine and its results via the Kubernetes API (i.e. the necessary RBAC objects should be deployed as part of the Helm chart)

With the prerequisites set up, we now need to define the container within the Pod/Job that will execute the tests. The tests will be in one bash script and will involve repeatedly running the curl command against the kubernetes.default.svc DNS name, which is the recommended approach to querying the API within a pod. With these curl commands, we’ll be able to patch and query the ChaosEngine.
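As a rough sketch of the first two prerequisites and the RBAC requirement, the snippet below writes a stopped ChaosEngine and a Role for the test pod into a chart. All names (`nginx-chaos`, `pod-delete-sa`, `chaos-test-role`, `app=nginx`) are hypothetical, the field names reflect my understanding of the `v1alpha1` ChaosEngine schema, and a ServiceAccount plus RoleBinding would still be needed to attach the Role to the test pod.

```shell
#!/usr/bin/env bash
# Sketch: chart templates for a stopped ChaosEngine and the Role the test pod needs.
# All object names and labels below are hypothetical.

write_chaos_templates() {
  local chart_dir="$1"
  mkdir -p "$chart_dir/templates"
  cat > "$chart_dir/templates/chaosengine.yaml" <<'EOF'
apiVersion: litmuschaos.io/v1alpha1
kind: ChaosEngine
metadata:
  name: nginx-chaos
spec:
  # Stopped by default; the test pod patches this to "active".
  engineState: stop
  annotationCheck: "false"
  appinfo:
    appns: default
    applabel: app=nginx
    appkind: deployment
  # Service account holding the RBAC needed to run the experiment.
  chaosServiceAccount: pod-delete-sa
  experiments:
    - name: pod-delete
EOF
  cat > "$chart_dir/templates/test-role.yaml" <<'EOF'
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: chaos-test-role
rules:
  # Lets the test pod GET and PATCH ChaosEngines via the Kubernetes API.
  - apiGroups: ["litmuschaos.io"]
    resources: ["chaosengines"]
    verbs: ["get", "patch"]
EOF
}

# Example: write_chaos_templates ./mychart
```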

At the start of the script, we’ll need to fetch the token of the service account within the pod. We will need this token to run our curl commands with the correct permissions assigned to the service account.

KUBE_TOKEN=$(cat /var/run/secrets/kubernetes.io/serviceaccount/token)

curl -sSk -H "Authorization: Bearer $KUBE_TOKEN" $URL

The URL follows the convention https://kubernetes.default.svc:443/apis/$GROUP_NAME/$VERSION/namespaces/$NAMESPACE/$RESOURCE_TYPE/$RESOURCE_NAME

In this case:

- `$GROUP_NAME` is `litmuschaos.io`
- `$VERSION` is `v1alpha1`
- `$RESOURCE_TYPE` is `chaosengines`

The output of the curl command would be a description of the deployed ChaosEngine, which includes the engine status, the chaos experiments and the results of the experiments.
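To make the URL convention concrete, here is a small sketch that builds the URL for a ChaosEngine and queries it. The function and variable names are hypothetical. Note that for namespaced custom resources the Kubernetes API expects a `namespaces/` path segment and the plural resource name (`chaosengines`).

```shell
#!/usr/bin/env bash
# Sketch: build the API URL for a ChaosEngine and fetch it.
# Function and variable names are hypothetical.

API_SERVER="https://kubernetes.default.svc:443"

chaosengine_url() {
  local namespace="$1" name="$2"
  # /apis/<group>/<version>/namespaces/<namespace>/<plural resource>/<name>
  echo "${API_SERVER}/apis/litmuschaos.io/v1alpha1/namespaces/${namespace}/chaosengines/${name}"
}

get_chaosengine() {
  local token
  token="$(cat /var/run/secrets/kubernetes.io/serviceaccount/token)"
  # -k skips TLS verification, matching the commands in this post.
  curl -sSk -H "Authorization: Bearer ${token}" "$(chaosengine_url "$1" "$2")"
}

# Example: get_chaosengine default nginx-chaos | jq '.status'
```

The service account mount also contains a `ca.crt` file, so passing `--cacert /var/run/secrets/kubernetes.io/serviceaccount/ca.crt` instead of `-k` is an option if you want curl to verify the API server's certificate.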

To start the experiment by patching the ChaosEngine we’ll need to modify the curl command to:

- Set the `Content-Type` header to `application/json-patch+json`
- Set the request method to `PATCH`

An example is below:

curl -sSk -H "Authorization: Bearer $KUBE_TOKEN" -H "Content-Type: application/json-patch+json" --request PATCH --data '[{"op": "replace", "path": "/spec/engineState", "value": "active"}]' $URL

To stop the ChaosEngine you would set the value to stop instead of active.
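Since starting and stopping differ only in the patched value, the two calls could share one helper, as in this sketch. The function names are hypothetical, and `KUBE_TOKEN` is assumed to have been set as shown earlier.

```shell
#!/usr/bin/env bash
# Sketch: helpers to switch a ChaosEngine on and off. Function names are hypothetical.

# Print the JSON Patch document that sets spec.engineState to $1.
engine_state_patch() {
  local state="$1"
  printf '[{"op": "replace", "path": "/spec/engineState", "value": "%s"}]' "$state"
}

# PATCH the ChaosEngine at URL $1 to state $2, using the token in KUBE_TOKEN.
set_engine_state() {
  local url="$1" state="$2"
  case "$state" in
    active|stop) ;;
    *) echo "unknown engine state: $state" >&2; return 2 ;;
  esac
  curl -sSk \
    -H "Authorization: Bearer ${KUBE_TOKEN}" \
    -H "Content-Type: application/json-patch+json" \
    --request PATCH \
    --data "$(engine_state_patch "$state")" \
    "$url"
}

# Example:
#   set_engine_state "$URL" active   # start the experiments
#   set_engine_state "$URL" stop     # stop them again
```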

Now that we’re aware of how to get a pod to query and patch the ChaosEngine we can define the following algorithm for our tests:

1. Patch the `ChaosEngine` and activate/trigger the experiments
2. Query the `ChaosEngine` until the experiments are completed
3. Patch the `ChaosEngine` and disable/stop the experiments

You can find example code for this (and the Helm chart) here. The gif below is a demonstration of the workflow in action.

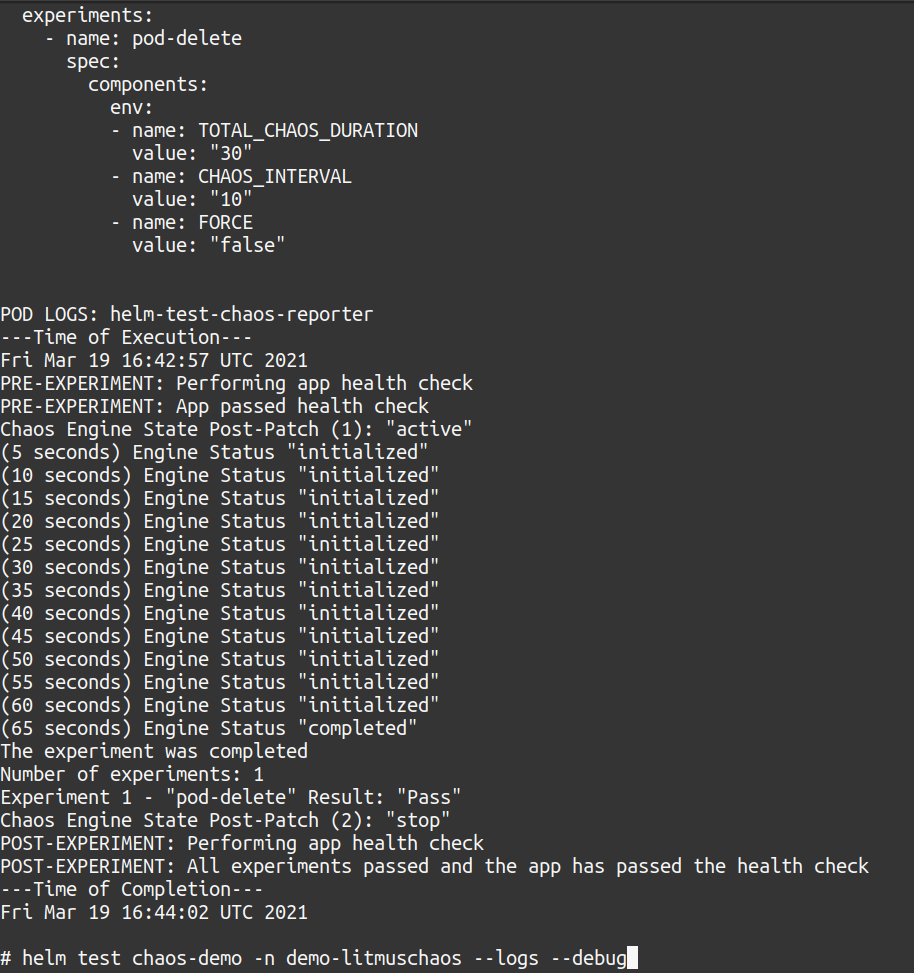
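For readers who want a feel for the script without opening the example code, the patch, poll and stop algorithm could be sketched roughly as follows. This is not the code from the linked repository. It assumes `KUBE_TOKEN` and `URL` are set as described earlier, and that the ChaosEngine reports `.status.engineStatus` (reaching `completed`) and a `verdict` per entry in `.status.experiments`; the function names, timeout and polling interval are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch of the test script. Assumes KUBE_TOKEN and URL are already set, and that the
# ChaosEngine exposes .status.engineStatus and .status.experiments[].verdict.

api() { curl -sSk -H "Authorization: Bearer ${KUBE_TOKEN}" "$@"; }

patch_state() {
  api -H "Content-Type: application/json-patch+json" --request PATCH \
    --data "[{\"op\": \"replace\", \"path\": \"/spec/engineState\", \"value\": \"$1\"}]" \
    "$URL" > /dev/null
}

# Read experiment verdicts (one per line on stdin); succeed only if there is
# at least one verdict and every verdict is "Pass".
verdicts_exit_code() {
  local verdict seen=0
  while IFS= read -r verdict; do
    seen=1
    [ "$verdict" = "Pass" ] || return 1
  done
  [ "$seen" -eq 1 ]
}

run_chaos_test() {
  local timeout="${1:-300}" interval="${2:-5}" waited=0 status="" rc=0

  patch_state active                        # 1. trigger the experiments

  until [ "$status" = "completed" ]; do     # 2. poll until the engine completes
    if [ "$waited" -ge "$timeout" ]; then
      echo "timed out waiting for the ChaosEngine" >&2
      patch_state stop
      return 1
    fi
    sleep "$interval"
    waited=$((waited + interval))
    status="$(api "$URL" | jq -r '.status.engineStatus // empty')"
  done

  api "$URL" | jq -r '.status.experiments[]?.verdict' | verdicts_exit_code || rc=1

  patch_state stop                          # 3. stop the experiments
  return "$rc"
}

# Example, as the last line of the test container's script:
#   run_chaos_test 300 5; exit $?
```

Returning a non-zero code when any verdict is not `Pass` is what lets `helm test` report the chaos experiment as a failed test.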

The experiment would take at least a minute to complete, so the gif was cut short. In the gif, the logs for the tests came from the helm-test-chaos-reporter pod. If you use the --logs flag with helm test, the logs will be shown as part of the output of the helm test command (alongside other details) once the test either passes or fails (the image below shows the tail end of the output).

This is as far as we’re going to go with LitmusChaos today, but by combining LitmusChaos with Helm we’re able to easily configure chaos experiments for specific Helm charts/releases (i.e. Kubernetes applications) and run them.

With this approach and the right chaos experiments, Helm chart maintainers will be able to create a test suite that confirms the resilience of the Kubernetes application deployed as part of a Helm chart, and include this test suite within a CI pipeline that can provide ample feedback to developers.

We’re looking forward to integrating this new workflow in our internal projects and exploring the other CNCF sandbox projects.